In recent years, artificial intelligence (AI) language models have become ubiquitous tools for generating human-like text, powering chatbots, and automating content creation across many domains. These advances have also spilled over into biology, where researchers are using language models to decipher the information encoded in DNA and proteins. By treating biological sequences as a form of language, these models learn patterns and relationships across the vast diversity of biomolecules, accelerating predictions and offering fresh insights into life’s molecular machinery. Despite this promise, one critical challenge has persisted: it remains difficult to tell how reliable a given prediction is, or how much confidence to place in it.

To address this gap, computational biologists at Emory University have introduced an approach for quantifying the trustworthiness of protein language model embeddings. Their framework, published in Nature Methods, evaluates the quality of a model’s internal representations by contrasting embeddings of natural proteins with embeddings of synthetic, random sequences. This comparison reveals how clearly the model separates biologically meaningful signal from noise, an important step toward understanding and validating AI-driven biological inferences.

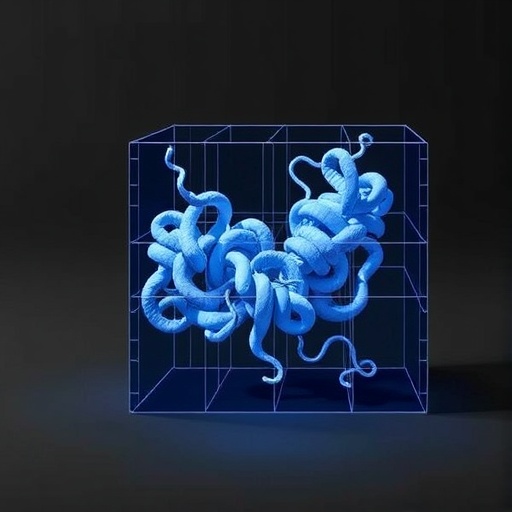

Embeddings are the numerical representations that language models assign to data, condensing complex inputs into abstract vectors within a latent space where proximity implies similarity. In protein language models, this latent space acts as a kind of catalogue, organizing protein sequences by the structural and functional features the model learned during training. The Emory team visualized this latent space as a scatter plot and observed that natural proteins cluster according to their evolutionary and functional subtypes, while synthetic sequences, which carry no biological meaning, occupy distinctly separate regions. They dubbed this latter region the “junkyard,” proposing that it collects low-quality embeddings that reflect the model’s unfamiliarity with non-biological sequences.
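To make “proximity implies similarity” concrete, here is a minimal Python sketch using made-up three-dimensional vectors; real protein language model embeddings have hundreds to thousands of dimensions. The protein names and vector values are hypothetical, and cosine similarity is simply one common way to measure closeness between embeddings, not necessarily the measure used in the study.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two vectors: 1.0 means same direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

# Hypothetical toy embeddings (illustrative values only).
embeddings = {
    "kinase_A": [0.9, 0.1, 0.2],
    "kinase_B": [0.8, 0.2, 0.1],
    "random_seq": [-0.3, 0.7, -0.6],
}

def nearest_neighbor(name, embeddings):
    """Return the other entry whose embedding is most similar to `name`'s."""
    query = embeddings[name]
    others = [n for n in embeddings if n != name]
    return max(others, key=lambda n: cosine_similarity(query, embeddings[n]))

print(nearest_neighbor("kinase_A", embeddings))  # prints: kinase_B
```

The two related (hypothetical) kinases point in nearly the same direction, while the random sequence points away from both, which is the geometric intuition behind natural proteins clustering apart from the “junkyard.”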

Central to their methodology is a “random neighbor score,” a metric that quantifies how close a given protein’s embedding lies to the embeddings of synthetic, random sequences within the latent space. A low score means few or no synthetic neighbors are nearby, suggesting the model represents that protein with high confidence. Conversely, a high score means the embedding sits closer to the “junkyard,” signaling uncertainty or reduced reliability. This measure provides a computationally efficient, biologically grounded proxy for the model’s certainty across diverse proteins and subsequences.
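The article does not give the exact formula. As a minimal sketch, assume the score is the fraction of a protein’s k nearest neighbors in embedding space (by Euclidean distance) that are random sequences. The function name `random_neighbor_score`, the choice of k, the distance metric, and the toy 2-D vectors below are all illustrative assumptions, not the authors’ code.

```python
import math

def euclidean(a, b):
    """Straight-line distance between two equal-length vectors."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))

def random_neighbor_score(query, reference, k=3):
    """Fraction of the query's k nearest reference embeddings that come from
    random sequences. `reference` is a list of (vector, is_random) pairs.
    ASSUMPTION: a hypothetical formulation for illustration only."""
    if k < 1 or k > len(reference):
        raise ValueError("k must be between 1 and len(reference)")
    ranked = sorted(reference, key=lambda item: euclidean(query, item[0]))
    nearest = ranked[:k]
    return sum(1 for _, is_random in nearest if is_random) / k

# Hypothetical reference set: natural proteins cluster near (1, 1),
# random sequences near (-1, -1), i.e. the "junkyard".
reference = [
    ([1.0, 1.1], False),
    ([0.9, 1.0], False),
    ([1.2, 0.9], False),
    ([-1.0, -0.9], True),
    ([-1.1, -1.0], True),
    ([-0.9, -1.2], True),
]

print(random_neighbor_score([1.0, 1.0], reference))         # 0.0: no random neighbors
print(random_neighbor_score([-1.0, -1.0], reference))       # 1.0: deep in the junkyard
print(random_neighbor_score([0.0, 0.2], reference, k=4))    # 0.25: one random neighbor of four
```

Sorting every reference point is fine for a toy example; with millions of embeddings, one would typically switch to an (approximate) nearest-neighbor index rather than a brute-force scan.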

The implications of this development reach well beyond theory. Proteins, encoded by DNA, fold into intricate three-dimensional structures that underpin nearly all cellular processes, from catalysis and signaling to defense. With over 200 million protein sequences cataloged in databases such as UniProt, language models have a substantial foundation for training. Yet the true diversity of proteins extends into the trillions, much of it residing in the poorly explored microbial world captured by community metagenomes. Emory’s framework offers a way to assess whether inferences drawn from the sequences a model has seen can generalize reliably to this vast, largely uncharted biosphere.

Understanding metagenomic complexity matters because microorganisms do not live in isolation; they form dynamic communities that profoundly affect host health and ecosystem function. Characterizing the proteins these communities encode reveals biochemical pathways and interactions that conventional experimental methods struggle to study at scale. By clarifying how far an AI model’s interpretation of a protein sequence can be trusted, the new confidence metric strengthens computational biology’s ability to deliver such insights.

This advancement also illuminates the intricate process of evolution etched into protein sequences. Evolution conserves amino acid residues essential for a protein’s function, imprinting a signature that language models learn to recognize during training. Natural proteins, therefore, exhibit coherent embedding patterns, reflective of their functional and structural constraints shaped over billions of years. Synthetic random sequences, bereft of adaptive significance, lack these signatures and cluster apart in embedding space. By leveraging this evolutionary contrast, the Emory team has devised a method to “peer inside” the black box of AI models, exposing the hallmark features that guide their predictions.

Further validation demonstrated that embeddings flagged as low-quality or uncertain by the random neighbor score tended to perform poorly in downstream biological tasks. These included function prediction, structural modeling, and interaction inference—core applications where misinterpretation can misguide research efforts. Therefore, employing this uncertainty measure not only improves model interpretability but also safeguards the fidelity of scientific conclusions derived from AI predictions, fostering greater trust in computational methodologies.

Despite its simplicity, the approach applies broadly across the growing landscape of biological language models. As new architectures and training paradigms emerge, an intrinsic measure of embedding quality becomes crucial for guiding model design and application. A biologically anchored uncertainty score marks a shift away from generic metrics borrowed from computer science toward domain-specific criteria that align more closely with empirical biological evidence.

Just as a surgeon relies on the sharpest instruments to maximize precision and minimize risk, computational biologists can now choose and refine AI models with a clearer view of their strengths and limitations. This precision is especially important when extrapolating to the unexplored proteomic “dark matter” of environmental and clinical microbiomes, where experimental validation lags and computational predictions hold the key to discovery.

By fostering heightened quality control at every stage of protein data modeling—from sequence input through embedding to downstream prediction—this method mitigates the compounding of errors that can arise when working with noisy or unrepresentative datasets. Accurate uncertainty quantification is paramount in a field where even slight errors can propagate through complex biological networks, leading to misleading interpretations or missed opportunities.

This research was supported by the National Science Foundation and represents a milestone in integrating AI reliability assessment with molecular biology. The work strengthens the link between computational modeling and empirical biology, highlighting the potential of language models while tempering enthusiasm with rigorous validation. As AI-driven biology continues to evolve, transparency and confidence measures such as the random neighbor score will help steer the field toward more robust and insightful discoveries.

Subject of Research: Not applicable

Article Title: Quantifying uncertainty in protein representations across models and tasks

News Publication Date: 1-Apr-2026

Web References: DOI link

Image Credits: Bromberg lab

Keywords

Bioinformatics, Sequence analysis, Research methods, Complex networks, Computers, Metagenomics, Protein functions