As artificial intelligence (AI) technologies continue to advance at a rapid pace, their integration into academic writing has become both ubiquitous and transformative. The emergence of generative AI tools has shifted the paradigm of how students approach drafting essays, research papers, and other written assignments. Rather than questioning whether AI is involved in student work, educators now grapple with understanding precisely how AI is used throughout the writing process. This shift calls for not only detection but deeper insight into the nuances of AI-driven composition.

In a landscape where nearly 90% of college students reportedly employ AI in their academic tasks, with close to half utilizing it during draft development, the limitations of traditional evaluation frameworks become glaring. Tools like Grammarly and Turnitin, long relied upon to ensure integrity and original thought, fall short in addressing the layered interaction between student creativity and AI assistance. These conventional systems primarily attempt to detect plagiarism or stylistic errors, yet they cannot illuminate the process by which ideas are generated, refined, and altered via AI collaboration.

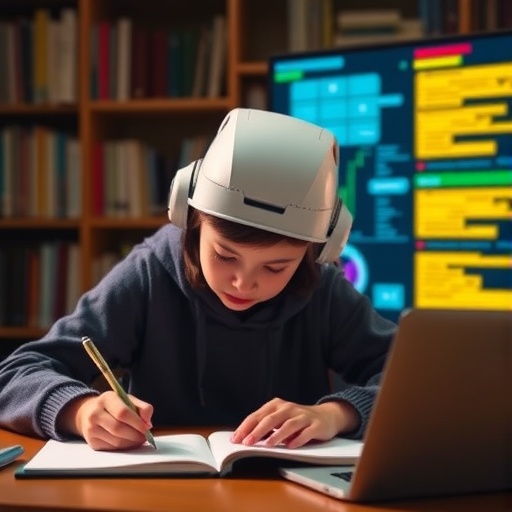

Addressing this critical gap, researchers at Georgia Tech and Stanford have introduced DraftMarks, an innovative open-source platform designed to visualize the intricate interplay between human writers and AI during the composition journey. Unlike tools that simply flag AI-generated content, DraftMarks offers a granular, augmented reading experience. It overlays intuitive visual annotations on textual drafts, revealing when AI was prompted, where it generated content, and how human writers iterated upon AI suggestions. This approach transforms the understanding of student writing from a static artifact into a dynamic narrative of co-creation.

DraftMarks’ visual language cleverly incorporates metaphors from the analog writing process, thereby making the AI collaboration visible and interpretable. For example, ‘eraser crumbs’ indicate sections subjected to heavy revision, signaling thoughtful engagement with prior text. ‘Smudges’ communicate AI-driven refinements that strengthen arguments without altering core content, while ‘masking tape’ highlights passages originally generated by AI. Additional markers, such as ‘glue residue,’ reveal where AI text was subsequently removed, and ‘ghost text’ depicts prompts for AI output that the writer chose not to use. Together, these annotations provide layered context, demystifying the AI’s role in shaping the final document.
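To make the marker vocabulary concrete, here is a minimal Python sketch of how such annotations might be represented. The five marker names and their meanings come from the description above. The `Mark`, `Annotation`, and `legend` names are hypothetical and are not taken from the DraftMarks codebase, whose actual data model is not described here.

```python
from dataclasses import dataclass
from enum import Enum


class Mark(Enum):
    """Marker names from the article; values paraphrase their stated meanings."""
    ERASER_CRUMBS = "section heavily revised"
    SMUDGE = "AI refinement that leaves core content unchanged"
    MASKING_TAPE = "passage originally generated by AI"
    GLUE_RESIDUE = "AI text that was later removed"
    GHOST_TEXT = "AI prompt whose output the writer did not use"


@dataclass(frozen=True)
class Annotation:
    """A hypothetical annotation: a character span in the draft plus its marker."""
    start: int
    end: int
    mark: Mark


def legend(annotations):
    """Return one legend line per marker used, in the enum's definition order."""
    used = {a.mark for a in annotations}
    return [
        f"{m.name.lower().replace('_', ' ')}: {m.value}"
        for m in Mark
        if m in used
    ]


if __name__ == "__main__":
    draft_marks = [
        Annotation(0, 120, Mark.MASKING_TAPE),
        Annotation(120, 200, Mark.ERASER_CRUMBS),
        Annotation(200, 260, Mark.MASKING_TAPE),
    ]
    for line in legend(draft_marks):
        print(line)
```

A reader-facing view could render such a legend beside the draft so that each overlay is explained only when it actually appears.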

This system stands in stark contrast to AI detection models that return only a percentage score reflecting probable AI involvement and offer little interpretive value. DraftMarks empowers both students and educators to critically reflect upon how AI influences tone, argumentation, and overall authorship intent. By externalizing invisible cognitive processes, it encourages writers to exercise agency in deciding whether to accept, adapt, or reject AI-generated suggestions. This transparency nurtures a more intentional and ethically grounded collaboration with emerging technologies.

The development of DraftMarks was grounded in close collaboration with educators so that its design aligns with pedagogical priorities. Researchers conducted ethnographic studies involving 21 instructors to observe how they engage with student writing and which cues they look for regarding revision rigor, learning progression, and originality. Insights from these observations shaped DraftMarks’ interface and visual metaphors, so that the tool echoes the familiar embodied experience of writing and editing. Because of this human-centered design, DraftMarks does not merely detect AI but augments educators’ interpretive capabilities.

Behind the user-facing visuals lies a backend system capable of tracking draft histories in near real time. By continuously monitoring document evolution and classifying different edit types, the platform dynamically updates visual markers to reflect ongoing writer-AI interactions. This temporal dimension provides educators with a “live” window into the writing process, allowing them to assess how students engage with AI iteratively rather than judging static final submissions. Such granularity supports a richer dialogue around learning and collaborative authorship.
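The idea of tracking draft history and classifying edits can be illustrated with a small Python sketch built on the standard library's `difflib`. This is an assumption-laden illustration, not the DraftMarks backend: the `apply_edit` function, the word-level ownership list, and the rules that map an edit to a marker are all hypothetical simplifications (for example, any single human replacement is labeled ‘eraser crumbs’ here, whereas the article reserves that marker for heavy revision).

```python
import difflib


def apply_edit(words, owners, new_text, actor):
    """Diff the current draft against new_text; return (new_words, new_owners, marks).

    words and owners are parallel lists: owners[i] is "human" or "ai" for words[i].
    actor is whoever produced new_text. The marker rules are illustrative assumptions.
    """
    new_words = new_text.split()
    matcher = difflib.SequenceMatcher(a=words, b=new_words, autojunk=False)
    new_owners, marks = [], []
    for tag, i1, i2, j1, j2 in matcher.get_opcodes():
        if tag == "equal":
            # Unchanged words keep their original provenance.
            new_owners.extend(owners[i1:i2])
            continue
        removed_ai = "ai" in owners[i1:i2]
        # Any newly written words are attributed to the current actor.
        new_owners.extend([actor] * (j2 - j1))
        if tag == "delete":
            marks.append("glue residue" if removed_ai else "deletion")
        elif tag == "insert":
            marks.append("masking tape" if actor == "ai" else "human insertion")
        else:  # tag == "replace"
            marks.append("smudge" if actor == "ai" else "eraser crumbs")
    return new_words, new_owners, marks


if __name__ == "__main__":
    words, owners = [], []
    history = [
        ("Cats are great pets", "human"),
        ("Cats are great pets because they are independent", "ai"),
        ("Cats are great pets", "human"),
    ]
    for text, actor in history:
        words, owners, marks = apply_edit(words, owners, text, actor)
        print(actor, marks)
```

Keeping a per-word ownership list is one simple way to tell a removal of AI text apart from an ordinary deletion. A production system would likely need finer-grained spans and timestamps.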

To evaluate DraftMarks’ effectiveness beyond the laboratory environment, the research team conducted a comprehensive study involving 70 participants from diverse backgrounds, including students, educators, journalists, and casual readers. Their responses illuminated varying interpretive uses of the tool. Instructors valued the insight into how students navigated AI assistance and exercised critical judgment, which is pivotal for assessing pedagogical outcomes. In contrast, general readers used DraftMarks as a guide for gauging authorial authenticity and trustworthiness, highlighting its broader relevance for transparency in digital-era writing.

The implications of DraftMarks extend beyond academia into the realm of public discourse, where AI-generated texts proliferate rapidly. In an era marked by concerns over misinformation and synthetic media, tools that reveal the generative provenance and editorial shaping of content are vital. DraftMarks’ approach aligns with calls for responsible and transparent AI integration, fostering an environment where readers can critically assess not only what is written but how and why it was composed. This represents a meaningful contribution to digital literacy amid escalating AI adoption.

Far from treating AI as an adversarial force, DraftMarks encourages a nuanced reflection on the evolving nature of authorship. It recognizes that AI can serve as a collaborative partner rather than a mere productivity hack or cheating tool. By visually mapping the negotiation between human creativity and algorithmic suggestion, the software invites writers to deliberate on their ethical and artistic choices. As a result, users reportedly develop heightened awareness of subtle shifts in tone and meaning introduced by AI, underscoring the profound influence even minor AI interventions may exert on expression.

The broader research underscores a paradigm shift in AI literacy, emphasizing transparency and reflection over detection and enforcement. By fostering awareness and dialogue about AI’s role, tools like DraftMarks can redefine writing pedagogy to accommodate hybrid human-AI workflows. This reorientation has the potential to enrich learning, empower students as intentional co-authors, and equip educators with advanced means for assessment. As AI becomes an embedded medium for textual creativity, such innovations represent pivotal steps toward harmonizing technological capabilities with human values.

As the academic community confronts the realities of AI-augmented writing, DraftMarks offers a compelling vision for the future. It transcends simplistic metrics of AI presence and instead reveals a rich tapestry of interaction, revision, and judgment. The tool’s blend of technical sophistication and humanistic design provides a model for integrating AI into education that is transparent, ethically informed, and pedagogically effective. Ultimately, DraftMarks exemplifies how thoughtful technology can illuminate the invisible processes at the heart of creative work in the digital age.

Subject of Research: The development and use of DraftMarks, an AI-visualization tool that reveals the writing process involving human and AI collaboration in academic contexts.

Article Title: Revealing Invisible Collaboration: DraftMarks Illuminates AI-Human Interaction in Student Writing

News Publication Date: April 2024

Web References:

https://copyleaks.com/blog/ai-in-action-2025-student-ai-usage-report

https://mediasvc.eurekalert.org/Api/v1/Multimedia/32ab4af5-694a-47dd-9e60-9fafbfd428c9/Rendition/low-res/Content/Public

Image Credits: Georgia Tech

Keywords: Generative AI, Academic Writing, AI in Education, Draft Visualization, Human-AI Collaboration, Writing Process Transparency, Educational Technology, Authorship Integrity, Computational Linguistics, Digital Literacy, Open-source Tools