The human hand is a marvel of biological engineering. It contains 34 muscles, 27 joints, and over a hundred tendons and ligaments that work together to produce the countless gestures we perform daily. Replicating this dexterity has long been a formidable challenge in robotics and virtual reality, where precision and real-time responsiveness are paramount. Now a team of engineers at MIT offers a new approach: a wearable ultrasound wristband that tracks hand movements with high accuracy, opening new possibilities for remote control of robotic hands and immersive virtual interactions.

Unlike conventional hand-tracking systems that rely on external cameras or cumbersome sensor gloves, this ultrasound wristband images the wrist’s internal structures (muscles, tendons, and ligaments) in real time as the wearer moves their hand. A miniaturized ultrasound transducer sticker, embedded in a compact wristband roughly the size of a smartwatch, continually captures cross-sectional ultrasound images of the wrist. This approach bypasses many limitations inherent in optical systems, such as occlusions and constrained fields of view, and avoids the loss of natural touch caused by tight sensor gloves.

The key to translating these ultrasound images into precise hand kinematics is an artificial intelligence algorithm trained specifically to decode the subtle muscle and tendon configurations that correlate with finger positions and movements. Human fingers possess up to 22 degrees of freedom, enabling an astonishing range of articulation. The researchers trained the AI model on ultrasound datasets annotated using simultaneous multi-camera recordings, so that it learned which regions of the wrist ultrasound images correspond to distinct degrees of freedom, whether flexion of the thumb or abduction of the index finger. This learned mapping makes continuous, real-time tracking of complex hand gestures possible.
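The supervised pairing described above (wrist frames on one side, camera-derived joint angles on the other) can be illustrated with a toy sketch. This is not the team's model: a simple nearest-neighbor lookup stands in for the trained AI algorithm, the "ultrasound frames" are synthetic vectors, and every name here (`synth_frame`, `knn_predict`, `N_PIXELS`) is hypothetical.

```python
import math
import random

NUM_DOF = 22   # degrees of freedom cited in the article
N_PIXELS = 64  # hypothetical size of a flattened ultrasound frame


def synth_frame(angles, mixing, rng, noise=0.01):
    """Toy 'ultrasound frame': each pixel is a fixed linear mix of the
    joint angles plus a little sensor noise (an assumption, not real physics)."""
    return [sum(w * a for w, a in zip(row, angles)) + rng.gauss(0.0, noise)
            for row in mixing]


def knn_predict(frame, train_frames, train_angles, k=3):
    """Average the annotated joint angles of the k training frames
    that are closest (Euclidean distance) to the query frame."""
    ranked = sorted(range(len(train_frames)),
                    key=lambda i: math.dist(frame, train_frames[i]))
    nearest = [train_angles[i] for i in ranked[:k]]
    return [sum(col) / len(nearest) for col in zip(*nearest)]


rng = random.Random(0)
mixing = [[rng.uniform(-1, 1) for _ in range(NUM_DOF)] for _ in range(N_PIXELS)]

# Training set: poses (standing in for camera-derived labels) paired with frames.
train_angles = [[rng.uniform(0, 90) for _ in range(NUM_DOF)] for _ in range(200)]
train_frames = [synth_frame(a, mixing, rng) for a in train_angles]

# A fresh, noisy frame of a pose that appears in the training set.
query = synth_frame(train_angles[17], mixing, rng)
predicted = knn_predict(query, train_frames, train_angles, k=1)
```

With `k=1` the lookup simply returns the labels of the closest training frame; a learned model would instead generalize to poses it has never seen, which is the point of training on a large annotated dataset.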

Extensive testing confirmed the wristband’s versatility and robustness across diverse users. The research team fitted the device to eight volunteers with varying hand sizes and morphologies, who then performed an array of gestures, including fingerspelling all 26 letters of American Sign Language. The wristband consistently tracked and predicted hand configurations with high fidelity, demonstrating its applicability to nuanced, expressive hand movements. The researchers also validated the system’s ability to recognize grasping motions involving common objects such as tennis balls, bottles, scissors, and pencils, underscoring its potential utility in a variety of real-world scenarios.

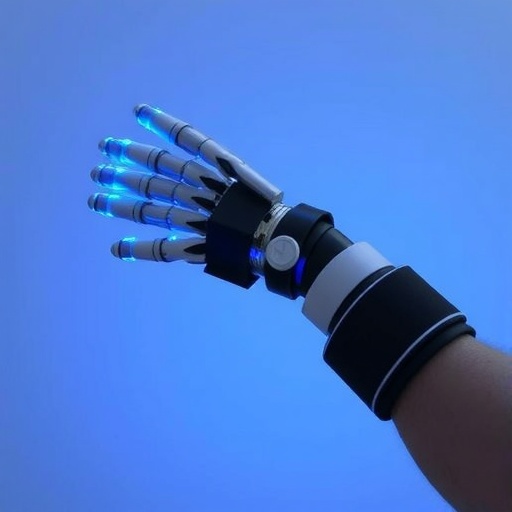

Beyond simple demonstration, the team implemented wireless control setups connecting the wristband to commercial robotic hands and computer interfaces. By mimicking the wearer’s gestures, the robotic hands performed complex coordinated actions like playing a piano keyboard or launching a mini basketball, exhibiting a nearly seamless “marionette-like” control fidelity. In virtual environments, pinching motions translated into zooming and resizing 3D objects, highlighting the technology’s promise for natural, intuitive control in augmented reality (AR) and virtual reality (VR) settings.
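As a rough illustration of how a pinch could drive zooming, the sketch below converts the distance between thumb and index fingertips into a clamped scale factor. The fingertip coordinates, the function names, and the scale limits are all assumptions made for this example; the article does not describe how the team implemented the mapping.

```python
import math


def pinch_distance(thumb_tip, index_tip):
    """Euclidean distance between two fingertip positions (x, y, z), in metres."""
    return math.dist(thumb_tip, index_tip)


def zoom_scale(start_dist, current_dist, min_scale=0.25, max_scale=4.0):
    """Scale factor for a 3D object: ratio of the current pinch distance to the
    distance when the gesture began, clamped to a hypothetical allowed range."""
    if start_dist <= 0:
        raise ValueError("start_dist must be positive")
    return max(min_scale, min(max_scale, current_dist / start_dist))


# Fingers start 2 cm apart and spread to 4 cm: the object doubles in size.
start = pinch_distance((0.00, 0.0, 0.0), (0.02, 0.0, 0.0))
now = pinch_distance((0.00, 0.0, 0.0), (0.04, 0.0, 0.0))
scale = zoom_scale(start, now)
```

Using the ratio rather than the absolute distance keeps the gesture independent of hand size, and the clamp prevents a noisy pose estimate from collapsing or exploding the object.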

From a technical standpoint, the miniaturized ultrasound sticker integrates piezoelectric transducers capable of generating and receiving high-frequency acoustic waves. Unlike typical medical ultrasound machines, these stickers are optimized for wearability, employing soft hydrogels and flexible electronics to maintain skin conformity and signal quality during dynamic wrist motions. The onboard processing unit compresses and wirelessly transmits ultrasound data, facilitating real-time external analysis by the AI engine. This combination of hardware miniaturization, advanced signal processing, and deep learning defines a new frontier in wearable bio-imaging for human-machine interfacing.
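The compress-and-transmit step can be pictured with a minimal sketch. The article does not say which compression scheme or packet format the device uses, so everything below is an assumption: frames are quantized to 8 bits, compressed with the general-purpose `zlib` codec, and prefixed with a small hypothetical header (frame ID and pixel count).

```python
import struct
import zlib

HEADER = "<IH"  # hypothetical header: uint32 frame id, uint16 pixel count


def pack_frame(frame_id, pixels):
    """Quantize pixel intensities in [0, 1] to 8 bits, compress, add a header."""
    quantized = bytes(min(255, max(0, round(p * 255))) for p in pixels)
    return struct.pack(HEADER, frame_id, len(pixels)) + zlib.compress(quantized)


def unpack_frame(packet):
    """Reverse of pack_frame; returns (frame_id, pixel intensities in [0, 1])."""
    frame_id, n_pixels = struct.unpack_from(HEADER, packet)
    raw = zlib.decompress(packet[struct.calcsize(HEADER):])
    if len(raw) != n_pixels:
        raise ValueError("pixel count does not match header")
    return frame_id, [b / 255 for b in raw]


# Round trip on a toy 100-pixel frame (a smooth intensity ramp).
original = [i / 99 for i in range(100)]
packet = pack_frame(7, original)
frame_id, restored = unpack_frame(packet)
```

The only loss in this sketch comes from the 8-bit quantization, which bounds the per-pixel error at half a quantization step; a real device would choose its codec based on bandwidth, latency, and power budgets.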

Traditional methodologies for capturing hand motion have often fallen short of this level of responsiveness or versatility. Optical camera-based systems require intricate setups that are sensitive to lighting and line-of-sight interruptions. Data gloves, embedded with inertial or flex sensors, compromise natural tactile feedback and impose physical constraints on the wearer. Alternatively, electromyography (EMG) measures electrical signals generated by muscle contractions but suffers from environmental noise and limited resolution in distinguishing fine finger articulations. The adoption of ultrasound imaging circumvents these pitfalls by directly visualizing internal anatomical changes.

The conceptual underpinning equates the wrist tendons and muscles to strings of a puppet, where each ultrasound image captures the real-time tension and positioning of these strings. By continuously monitoring these ‘strings,’ the system infers the pose of each finger and the palm with impressive continuity and granularity. The research embodies a clever fusion of biomechanical insight with cutting-edge imaging technology, paired elegantly with AI to bridge the complexity gap between raw sensor data and meaningful motion interpretation.

Looking toward the future, the MIT team is focused on further miniaturizing the wristband’s components to make the device more convenient and to lower its power consumption. Expanding the training datasets to cover a broader demographic base should also improve recognition accuracy and robustness, potentially enabling universal wearable deployment. Envisioned applications extend across healthcare, where fine motor control data could aid surgical robots; entertainment, offering enhanced virtual object manipulation; and teleoperation, facilitating remote dexterous robot control in hazardous environments.

Such developments herald a new paradigm in human-computer and human-robot interaction—where wearability, non-intrusiveness, and precision converge. This wearable ultrasound wristband represents a pivotal advance, moving beyond remote gesture capture toward real-time, nuanced control of machine counterparts that mimic even the subtlest human manual dexterity. As this technology progresses, it promises to redefine how we engage with machines, virtual worlds, and assistive robotics alike.

Xuanhe Zhao, the lead investigator and a professor at MIT, emphasizes the system’s potential to replace existing hand-tracking modalities in AR and VR. The wristband’s ability to generate a rich, continuous data stream could provide unprecedented control fidelity and immersion. Moreover, the generated datasets could expedite the training of humanoid robotic platforms, giving them dexterity previously unattainable without such detailed human movement data. The impact spans industries, from delivering enhanced gaming experiences to advancing robotic surgical assistants with human-like precision.

The comprehensive work is documented in the recent paper titled “Dexterous hand tracking via wearable wrist imaging,” published in the esteemed journal Nature Electronics. This multidisciplinary collaboration unites expertise in mechanical engineering, electrical engineering, computer science, and biophysics from institutions including MIT and the University of Southern California, pushing forward the frontier of wearable robotics and machine learning.

Subject of Research: Wearable hand motion tracking using ultrasound imaging and AI.

Article Title: “Dexterous hand tracking via wearable wrist imaging”

Web References: http://dx.doi.org/10.1038/s41928-026-01594-4

Image Credits: Melanie Gonick, MIT

Keywords

Robotics, Mechanical engineering, Artificial intelligence, Virtual reality, Human-robot interaction, Humanoid robots, Robotic grippers, Biomechanics, Ultrasound, User interfaces, Robot control