Researchers in the field of medical imaging have recently made significant advancements in the quality and accuracy of tumor detection through a new technique utilizing Conditional Generative Adversarial Networks (CGANs). This method, explored by S. Kamiyama, K. Usui, K. Suga, and colleagues, addresses key challenges faced in Sparse Projection Cone-Beam Computed Tomography (CBCT). As reliance on advanced imaging techniques grows in clinical practice, their study, published in J. Med. Biol. Eng., offers promising insights that could reshape the future of oncological diagnostics.

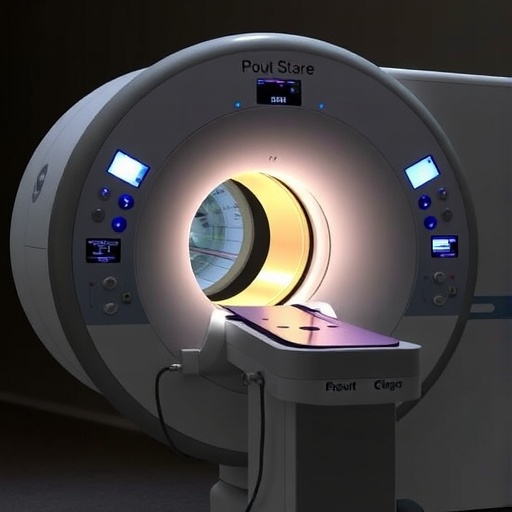

Cone-Beam CT is an imaging modality that provides detailed three-dimensional images, significantly impacting the way healthcare professionals visualize and analyze tumors. However, as the demand for imaging data increases, particularly in scenarios where a patient's radiation exposure must be kept low, Sparse Projection CBCT becomes a critical technique. This method captures fewer projections while still attempting to maintain image quality. The challenge lies in reconciling reduced data availability with image precision and fidelity, which is where the research team directed its focus.
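To make "fewer projections" concrete, here is a minimal sketch of how a sparse acquisition could be described as a subset of the view angles in a full scan. The function name `sparse_angles` and the numbers (360 views, every fourth view kept) are hypothetical and are not taken from the study.

```python
def sparse_angles(n_full, step, arc_deg=360.0):
    """Keep every `step`-th view angle (in degrees) from an evenly spaced full scan."""
    # Evenly spaced gantry angles for the full acquisition (assumed geometry).
    full = [i * arc_deg / n_full for i in range(n_full)]
    # Sparse acquisition: retain only a regular subset of those views.
    return full[::step]


# Hypothetical example: a 360-view scan reduced to one view in four.
angles = sparse_angles(n_full=360, step=4)
print(len(angles), angles[:3])
```

Fewer views mean a lower dose, but also less information available to the reconstruction, which is the gap the CGAN is meant to compensate for.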

The researchers employed a model that harnesses the potential of CGANs to enhance image quality and correct the shapes of tumors represented in the CBCT images. What sets CGANs apart from traditional imaging techniques is their ability to learn the underlying patterns and distributions within training datasets. The adversarial interplay between the two networks yields higher-quality images by effectively filling gaps in data while preserving important anatomical details.

The application of CGANs in sparse imaging is not merely a technological upgrade; it signifies a paradigm shift in how tumor shape representations can be handled. By training the GAN on a dataset that comprises both low-quality and high-quality images, researchers could generate images that not only appear less noisy but also possess detailed anatomical accuracy. Such precision is vital for clinicians when making life-altering decisions regarding treatment options.

Although the promise of enhanced imaging through CGANs is compelling, comprehensive validation is essential. The researchers ran a series of experiments to assess the effectiveness of their approach against traditional methods. They compared image quality, tumor shape fidelity, and diagnostic accuracy, using quantitative metrics such as the structural similarity index (SSIM) and peak signal-to-noise ratio (PSNR). The findings revealed that the CGAN-enhanced images significantly outperformed those derived from standard sparse projections.
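For readers unfamiliar with these two metrics, the sketch below shows how they are commonly defined. It is an illustration under stated assumptions, not the study's evaluation code: images are flat lists of intensities scaled to [0, 1], the SSIM here uses a single window covering the whole image (practical implementations average over local windows), and the names `psnr` and `global_ssim` are hypothetical.

```python
import math


def psnr(ref, test, data_range=1.0):
    """Peak signal-to-noise ratio in dB; higher means closer to the reference."""
    mse = sum((r - t) ** 2 for r, t in zip(ref, test)) / len(ref)
    if mse == 0:
        return math.inf  # identical images
    return 10.0 * math.log10(data_range ** 2 / mse)


def global_ssim(ref, test, data_range=1.0):
    """Single-window SSIM over the whole image (simplified; 1.0 means identical)."""
    n = len(ref)
    mu_x, mu_y = sum(ref) / n, sum(test) / n
    var_x = sum((x - mu_x) ** 2 for x in ref) / n
    var_y = sum((y - mu_y) ** 2 for y in test) / n
    cov = sum((x - mu_x) * (y - mu_y) for x, y in zip(ref, test)) / n
    # Standard stabilising constants from the SSIM definition.
    c1, c2 = (0.01 * data_range) ** 2, (0.03 * data_range) ** 2
    return ((2 * mu_x * mu_y + c1) * (2 * cov + c2)) / (
        (mu_x ** 2 + mu_y ** 2 + c1) * (var_x + var_y + c2)
    )


# Toy 4-pixel "images" (made-up values, for illustration only).
reference = [0.0, 0.5, 1.0, 0.5]
degraded = [0.1, 0.5, 0.9, 0.5]
print(psnr(reference, degraded), global_ssim(reference, degraded))
```

PSNR measures pixel-wise error, while SSIM compares local brightness, contrast, and structure, so the two are usually reported together.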

Breaking down the technical intricacies, one has to consider how the architecture of CGANs specifically contributes to these advancements. The network operates with two main components: a generator and a discriminator. The generator produces candidate images; in a conditional GAN it is guided by a conditioning input (in this setting, the sparse-projection image) rather than by random noise alone. The discriminator evaluates these images against real data for authenticity. Through iterative training, the generator improves its outputs, while the discriminator gets better at distinguishing between real and generated images.
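The tug-of-war between the two components can be written as a pair of loss functions. The following is a minimal sketch assuming a common image-to-image formulation in which the generator's adversarial loss is combined with an L1 term against the high-quality target. The article does not say which loss the authors used, so the L1 term, the weight of 100, and all function names are assumptions.

```python
import math


def bce(prob, target, eps=1e-12):
    """Binary cross-entropy for one predicted probability."""
    p = min(max(prob, eps), 1.0 - eps)  # clamp to avoid log(0)
    return -(target * math.log(p) + (1.0 - target) * math.log(1.0 - p))


def discriminator_loss(d_real, d_fake):
    """d_real / d_fake: discriminator's probability that a (sparse input, image)
    pair is genuine, for the real target and the generated image respectively."""
    return bce(d_real, 1.0) + bce(d_fake, 0.0)


def generator_loss(d_fake, generated, target, l1_weight=100.0):
    """Adversarial term (fool the discriminator) plus an assumed L1 fidelity term."""
    adversarial = bce(d_fake, 1.0)
    l1 = sum(abs(g - t) for g, t in zip(generated, target)) / len(target)
    return adversarial + l1_weight * l1


# An undecided discriminator (0.5 on both pairs) gives a loss of 2 * ln(2).
print(discriminator_loss(0.5, 0.5))
```

In training, the two losses are minimised in alternation: one update for the discriminator, then one for the generator.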

An impressive aspect of this research is its capacity for tumor shape correction. In sparse-projection datasets, tumor morphology can be distorted, leading to inaccuracies in size and boundaries. The study emphasizes that the CGANs proved adept at rectifying these discrepancies, allowing more accurate tumor shapes to be reconstructed. This capability may significantly enhance surgical planning and precision radiation therapy, where understanding the exact dimensions of a tumor is crucial.
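One common way to quantify how well a reconstructed tumor outline matches the true one is the Dice overlap between two binary masks. The article does not state which shape metric the authors used, so the sketch below is only an assumed illustration; `dice` and the toy masks are hypothetical.

```python
def dice(mask_a, mask_b):
    """Dice overlap of two binary masks given as flat sequences of 0/1 values."""
    intersection = sum(1 for a, b in zip(mask_a, mask_b) if a and b)
    total = sum(1 for a in mask_a if a) + sum(1 for b in mask_b if b)
    # Two empty masks are treated as a perfect match.
    return 1.0 if total == 0 else 2.0 * intersection / total


# Toy 6-pixel masks: the "distorted" tumor is shifted by one pixel.
true_shape = [0, 1, 1, 1, 0, 0]
distorted = [0, 0, 1, 1, 1, 0]
print(dice(true_shape, distorted))
```

A score of 1.0 indicates identical shapes, and lower values indicate boundary or size errors of the kind described above.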

Moreover, the implications of this research extend far beyond oncology alone. The underlying principles of using CGANs for image enhancement can be applicable across various domains of medical imaging, including cardiology and neurology. The versatility of these networks illustrates a broader potential that may lead to even greater breakthroughs across the health sciences spectrum.

This innovative research merges rigorous scientific inquiry with the practical reality of patient care, addressing the increasing demand for precise, reliable imaging while mitigating radiation exposure risks. As magnetic resonance imaging and ultrasound methods continue to evolve, CGAN-enhanced sparse-projection CBCT might offer a complementary option that lowers the burden on the patient while preserving image quality. The integration of artificial intelligence in healthcare imaging is not just a trend; it represents a key solution for the future of diagnostics.

In summary, the integration of Conditional Generative Adversarial Networks into Sparse Projection Cone-Beam Computed Tomography not only sets the stage for improved tumor detection but also fosters a broader dialogue about the future of AI in medical imaging. Healthcare professionals must stay abreast of these technological advancements, as they could significantly alter treatment approaches and improve patient outcomes across the globe.

The study spearheaded by Kamiyama, Usui, Suga, and their team signifies a leap forward, reinforcing the notion that innovation in imaging technologies can provide more than just visual data: it can refine the entire diagnostic process, ushering in a new era where every pixel counts in the fight against cancer.

As our understanding of artificial intelligence and imaging technology continues to grow, we may be on the brink of unlocking capabilities that do more than preserve the quality of medical imaging, moving toward a future where diagnostics are not only accurate but also immersive and profoundly insightful. The quest for excellence in tumor imaging through advanced computational methods illustrates an exciting, transformative period for the medical community as a whole.

In conclusion, the innovative research utilizing Conditional Generative Adversarial Networks showcases how artificial intelligence can bridge gaps in medical imaging, yielding enhanced image quality and tumor shape correction. This advancement stands as a testament to the continuous evolution of diagnostic capabilities, paving the way for more informed clinical decisions and improved patient outcomes.

Subject of Research: Enhancing image quality and tumor shape correction in Sparse Projection Cone-Beam CT using Conditional Generative Adversarial Networks.

Article Title: Image Quality and Tumor Shape Correction in Sparse Projection Cone-Beam CT Using Conditional Generative Adversarial Networks.

Article References: Kamiyama, S., Usui, K., Suga, K. et al. Image Quality and Tumor Shape Correction in Sparse Projection Cone-Beam CT Using Conditional Generative Adversarial Networks. J. Med. Biol. Eng. 45, 138–146 (2025). https://doi.org/10.1007/s40846-025-00927-6

Image Credits: AI Generated

DOI: https://doi.org/10.1007/s40846-025-00927-6

Keywords: Image Quality, Tumor Shape Correction, Sparse Projection, Cone-Beam CT, Conditional Generative Adversarial Networks, Medical Imaging, Oncology, Artificial Intelligence, Diagnostic Accuracy.