In finance, insurance, and the study of economic inequality, quantiles are fundamental tools for evaluating risk and describing distributions. Unlike simple averages, quantiles are difficult to handle statistically because they are estimated from order statistics rather than from sums of observations. This makes it challenging to build rigorous statistical inference methods around quantile-based estimates. A study recently published in the journal Risk Sciences addresses this long-standing challenge with a comprehensive nonparametric statistical inference framework for integrals of quantiles, also known as integrated quantiles.

The theory is built on general approximating sequences of cumulative distribution functions (cdfs), a choice that frees the framework from dependence on specific data structures or sampling designs. As a result, the theory adapts to diverse data configurations without rigid assumptions about the nature of the observations. This flexibility is a practical advantage as well as a conceptual one: researchers and practitioners working with dependent data, such as time series, can also apply the framework.

To bridge the gap between abstract theory and applications, the research team illustrated their inference theory using the familiar procedure of simple random sampling. This example shows how the framework can be implemented in standard data scenarios while laying the groundwork for extensions to more complex dependent-data settings. Such adaptability matters because real-world data frequently exhibit serial or spatial dependence, which can compromise the validity of inference methods grounded in independence assumptions.
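As a rough illustration of the simple random sampling case, the sketch below integrates the empirical quantile function of a sample over an interval [a, b]. It assumes the usual plug-in idea of replacing the unknown cdf with the empirical cdf, which the article does not spell out; the function name `integrated_quantile` and the toy data are hypothetical.

```python
def integrated_quantile(sample, a, b):
    """Integrate the empirical quantile function of `sample` over [a, b].

    Assumption: the empirical quantile function equals the i-th smallest
    observation on ((i - 1) / n, i / n], so the integral is a weighted
    sum of order statistics.
    """
    if not sample:
        raise ValueError("sample must be non-empty")
    if not 0.0 <= a <= b <= 1.0:
        raise ValueError("need 0 <= a <= b <= 1")
    xs = sorted(sample)
    n = len(xs)
    total = 0.0
    for i, x in enumerate(xs):
        # Overlap of [a, b] with the step interval [i / n, (i + 1) / n].
        lo = max(a, i / n)
        hi = min(b, (i + 1) / n)
        if hi > lo:
            total += x * (hi - lo)
    return total


data = [3.0, 1.0, 4.0, 2.0]                     # toy sample, for illustration only
full = integrated_quantile(data, 0.0, 1.0)      # whole range: the sample mean, 2.5
middle = integrated_quantile(data, 0.25, 0.75)  # middle half: (2 + 3) / 4 = 1.25
```

Integrating over the whole unit interval recovers the sample mean, which is one way to see integrated quantiles as a generalization of averages.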

A hallmark of the framework is that it unifies the large-sample arguments used for many classical statistical estimators. This includes, most notably, the analysis of trimmed means, estimators that exclude extreme values to better represent the central tendency. The theory not only recovers existing asymptotic results but also explains why certain conditions and assumptions naturally arise in them, offering a cohesive foundation for results that had previously been fragmented.
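The link between trimmed means and integrated quantiles can be made concrete with a small sketch. It assumes the common definition of a trimmed mean as the average of the quantile function over [a, b], that is, the integrated quantile divided by b - a; the function name `trimmed_mean` and the data are hypothetical.

```python
def trimmed_mean(sample, a, b):
    """Average of the empirical quantile function over [a, b], with a < b."""
    if not sample:
        raise ValueError("sample must be non-empty")
    if not 0.0 <= a < b <= 1.0:
        raise ValueError("need 0 <= a < b <= 1")
    xs = sorted(sample)
    n = len(xs)
    total = 0.0
    for i, x in enumerate(xs):
        # Length of the part of [i / n, (i + 1) / n] that lies inside [a, b].
        overlap = min(b, (i + 1) / n) - max(a, i / n)
        if overlap > 0:
            total += x * overlap
    return total / (b - a)


data = [1, 2, 3, 4, 5, 6, 7, 1000]         # one extreme value
plain = sum(data) / len(data)              # 128.5, dominated by the outlier
trimmed = trimmed_mean(data, 0.25, 0.75)   # 4.5, the mean of 3, 4, 5, 6
```

Trimming a quarter of the mass from each tail removes the outlier's influence entirely in this toy case.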

In keeping with this comprehensive aim, the authors examine the statistical properties of integrated quantiles in detail, addressing consistency, bias, and asymptotic normality. These properties are cornerstones of statistical estimation. Consistency means that the estimator converges to the true parameter as the sample size grows; bias measures systematic deviation from that truth; and asymptotic normality provides the foundation for constructing confidence intervals and hypothesis tests in large samples. The framework rigorously characterizes these aspects for various quantile integrals and their combinations, clarifying the theory that underlies risk and economic inequality metrics.
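Consistency can be seen informally in a small simulation. The sketch below is an illustration under stated assumptions, not the paper's analysis: it draws independent Uniform(0, 1) samples, for which the integral of the quantile function over [0, 0.5] is exactly 0.125, and reports the absolute error of a plug-in estimate at two sample sizes. The estimator, seed, and sample sizes are all hypothetical choices.

```python
import random


def integrated_quantile(sample, a, b):
    """Integrate the empirical quantile function of `sample` over [a, b]."""
    xs = sorted(sample)
    n = len(xs)
    total = 0.0
    for i, x in enumerate(xs):
        overlap = min(b, (i + 1) / n) - max(a, i / n)
        if overlap > 0:
            total += x * overlap
    return total


rng = random.Random(0)   # fixed seed so the run is reproducible
target = 0.125           # integral of u over [0, 0.5] for Uniform(0, 1)
errors = {}
for n in (100, 10_000):
    sample = [rng.random() for _ in range(n)]
    errors[n] = abs(integrated_quantile(sample, 0.0, 0.5) - target)
    print(n, errors[n])
```

A single run cannot establish consistency, but across repeated runs the error at the larger sample size is typically much smaller.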

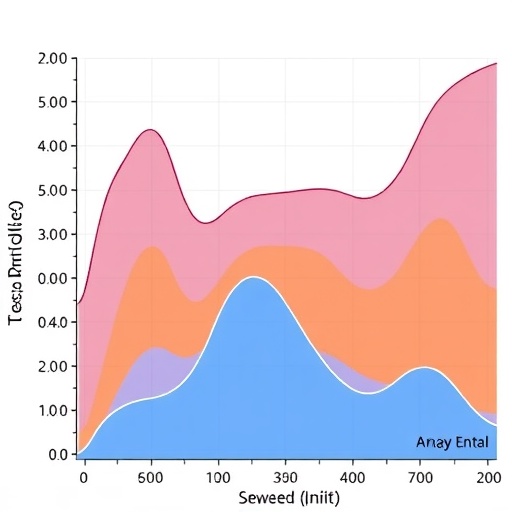

One compelling aspect of the research is its relevance to key risk assessment measures such as the upside and downside tail-values-at-risk (TVaR). These metrics are central in financial risk management, capturing the behavior of returns or losses in the extreme tails of a distribution. By framing these quantities within the integrated quantiles theory, the study paves the way for refined inference techniques that better quantify tail risks, which are often the most consequential for decision-making under uncertainty.
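To make the connection to integrated quantiles explicit, the sketch below computes empirical downside and upside TVaR at level p. It assumes the common definitions, with the downside value equal to the integral of the quantile function over [0, p] divided by p and the upside value equal to the integral over [p, 1] divided by 1 - p; the paper's exact conventions may differ, and the function name and loss data are hypothetical.

```python
def tail_values_at_risk(losses, p):
    """Return (downside, upside) empirical TVaR at level p, with 0 < p < 1."""
    if not losses:
        raise ValueError("losses must be non-empty")
    if not 0.0 < p < 1.0:
        raise ValueError("need 0 < p < 1")
    xs = sorted(losses)
    n = len(xs)
    lower = upper = 0.0
    for i, x in enumerate(xs):
        left, right = i / n, (i + 1) / n
        lower += x * max(0.0, min(p, right) - left)   # part of the step below p
        upper += x * max(0.0, right - max(p, left))   # part of the step above p
    return lower / p, upper / (1.0 - p)


losses = [1, 2, 3, 4, 5, 6, 7, 8]                     # toy losses
downside, upside = tail_values_at_risk(losses, 0.75)
# downside is the mean of the six smallest losses (3.5);
# upside is the mean of the two largest losses (7.5).
```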

Moreover, the framework extends into the domain of economic inequality measures, addressing classic constructs such as the Lorenz and Gini curves. These curves are graphical and summary tools that elucidate the distribution of income or wealth across populations. The paper’s development of integrated quantile inference provides a rigorous mathematical underpinning that enhances the precision and reliability of these inequality measures, opening avenues for more accurate socioeconomic analyses grounded in robust statistics.
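The Lorenz curve is itself a normalized integrated quantile, which the following sketch illustrates. It assumes the standard definition, the integral of the quantile function over [0, p] divided by the mean, and covers only the Lorenz curve, not the Gini curve; the function name and income data are hypothetical.

```python
def lorenz_curve(incomes, p):
    """Empirical Lorenz curve at p: income share held by the poorest fraction p."""
    if not incomes or any(x < 0 for x in incomes):
        raise ValueError("incomes must be non-empty and non-negative")
    if not 0.0 <= p <= 1.0:
        raise ValueError("need 0 <= p <= 1")
    xs = sorted(incomes)
    n = len(xs)
    mean = sum(xs) / n
    if mean == 0:
        raise ValueError("total income must be positive")
    partial = 0.0
    for i, x in enumerate(xs):
        # Part of the step interval [i / n, (i + 1) / n] that lies below p.
        overlap = min(p, (i + 1) / n) - i / n
        if overlap > 0:
            partial += x * overlap
    return partial / mean


incomes = [10, 20, 30, 40]               # toy incomes
share = lorenz_curve(incomes, 0.5)       # poorest half holds 30 of 100, i.e. 0.3
```

Inference for the Lorenz curve therefore involves a ratio of two integrated quantiles, which is the kind of combination the article says the framework covers.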

Crucially, the generality of the framework, underpinned by approximating sequences of cdfs, allows it to remain agnostic to specific data generation mechanisms. This robustness ensures that the theory can accommodate a wide gamut of practical scenarios, from independent sampling regimes to complex, dependent structures encountered in longitudinal financial data or panel datasets. Such universality distinguishes it from traditional inference methods that often require stringent assumptions that limit their empirical relevance.

The methodological advancements detailed in this study carry profound implications for the way quantile-based risk and inequality measures are understood and utilized. By delivering a unified nonparametric inferential structure, the authors provide researchers and practitioners with powerful tools to both confirm theoretical properties under broad conditions and execute reliable estimation in practice. This constitutes a significant leap forward in statistical theory with far-reaching consequences for economic and actuarial sciences.

Beyond its technical depth, the study offers a practical perspective on connecting theory and application. Presenting the results under simple random sampling gives users an accessible entry point for applying the theory, while the discussion of dependent data settings anticipates the growing need for inference procedures that reflect complex real-world dependencies. This dual emphasis eases the path from theoretical innovation to empirical adoption.

The researchers, including lead author Ričardas Zitikis of Western University in Canada, emphasize the comprehensive nature of their contribution, addressing longstanding obstacles in quantile inference. Their work disentangles many of the challenges that have historically restricted the statistical analysis of quantiles and integrated quantiles, clarifying the interplay between sampling design, dependence, and asymptotic properties.

As statistical inference continues to evolve in response to increasingly complex data environments, this study’s focus on nonparametric methods, free from overly restrictive assumptions, aligns well with contemporary needs. The capacity to handle integrated quantiles with general applicability advances the frontier in both theoretical statistics and applied domains that rely on precise risk assessment and inequality analysis.

In sum, this pioneering research lays foundational groundwork that has the potential to transform statistical inference for quantile-based functionals. Its methodological elegance, together with its practical generality, makes it a pivotal reference point for subsequent research and applications in fields where quantiles are indispensable. By illuminating the core principles that govern integrated quantile behavior under broad conditions, the study equips future inquiries with the conceptual and technical tools needed to navigate and unravel the complexities of order statistics in modern data analysis.

Article Title: Fundamentals of non-parametric statistical inference for integrated quantiles

Web References: 10.1016/j.risk.2025.100026

Keywords: Economics, Finance, Insurance, Algorithms