Researchers from the College of Engineering and Computer Science at Florida Atlantic University have developed a real-time American Sign Language (ASL) interpretation system that combines deep learning with precise hand-keypoint tracking. The approach could change how millions of deaf and hard-of-hearing people communicate. By applying artificial intelligence (AI) to sign recognition, it aims to lower long-standing communication barriers for this underserved community and make everyday interactions more accessible.

Communication challenges for deaf individuals often stem from the scarcity and high cost of human sign language interpreters, who are rarely available for spontaneous, everyday interactions. As more of daily life moves onto digital channels, the need for technology that supports real-time, accurate, and accessible communication has grown. The new ASL interpretation system is designed to help close this gap using AI.

At the heart of this research is ASL, a complex language in which distinct hand gestures represent letters, words, and phrases. Existing ASL recognition systems have made progress, but they often struggle with performance, accuracy, and robustness, particularly under varied real-world conditions. Visually similar gestures, such as "A" and "T" or "M" and "N", are still frequently misclassified, which undermines effective communication.

Solving these problems is made harder by limits on the availability and quality of training data. Image resolution, motion blur, inconsistent lighting, and variation in hand sizes and skin tones all affect how well a model performs. To address these challenges, the Florida Atlantic University team combined YOLOv11, an object-detection model, with MediaPipe's hand-tracking capabilities. This combination allows the system to recognize ASL alphabet letters in real time with high accuracy.

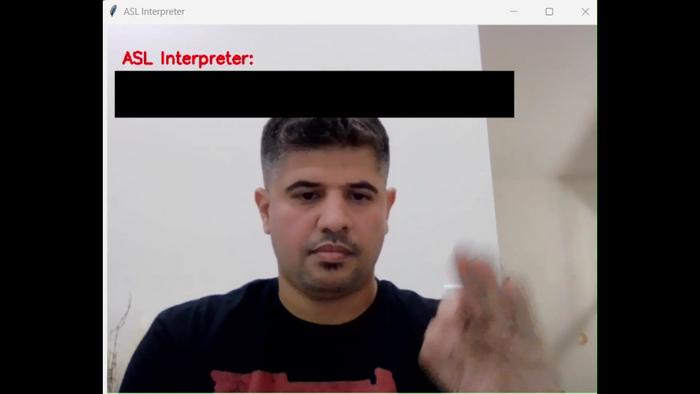

The system runs on a standard webcam, which serves as a non-intrusive sensor for capturing live visual data. The video feed is passed to MediaPipe, which locates 21 keypoints on each hand and builds a skeletal map of the hand that supports precise gesture recognition. YOLOv11 then uses these keypoints to detect and classify ASL letters, and it maintains high performance even under changing lighting and backgrounds.
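
Because the classifier works from a keypoint skeleton, one common way to make such features robust to where the hand sits in the frame and how close it is to the camera is to express every landmark relative to the wrist and rescale by a reference bone length. The sketch below illustrates that idea in plain Python. It is a hypothetical preprocessing step, not the FAU team's code: the function name `normalize_landmarks`, the use of the wrist-to-middle-finger-knuckle (MCP) distance as the scale, and the input format (21 `(x, y)` pairs in MediaPipe's landmark order, where index 0 is the wrist and index 9 is the middle-finger MCP, in aspect-corrected coordinates) are all assumptions.

```python
import math
from typing import List, Sequence, Tuple

# Assumption: 21 landmarks in MediaPipe Hands order (0 = wrist, 9 = middle-finger MCP).
# Hypothetical helper for illustration; not the study's published pipeline.
NUM_LANDMARKS = 21
WRIST = 0
MIDDLE_MCP = 9

Point = Tuple[float, float]


def normalize_landmarks(landmarks: Sequence[Point]) -> List[float]:
    """Return a flat feature vector that ignores hand position and overall size."""
    if len(landmarks) != NUM_LANDMARKS:
        raise ValueError(f"expected {NUM_LANDMARKS} landmarks, got {len(landmarks)}")
    wx, wy = landmarks[WRIST]
    # Translate so the wrist becomes the origin (removes position in the frame).
    shifted = [(x - wx, y - wy) for x, y in landmarks]
    # Divide by the wrist-to-middle-MCP distance (removes hand size and camera distance).
    # Rotation is not normalized in this sketch.
    mx, my = shifted[MIDDLE_MCP]
    scale = math.hypot(mx, my)
    if scale == 0.0:
        raise ValueError("degenerate hand: wrist and middle-finger MCP coincide")
    features: List[float] = []
    for x, y in shifted:
        features.extend((x / scale, y / scale))
    return features  # 42 values: x0, y0, x1, y1, ..., x20, y20
```

Two copies of the same hand pose that differ only in position and size map to the same 42-value vector, so a downstream classifier can concentrate on finger configuration, the cue that separates look-alike letters.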

Bader Alsharif, the study's first author, highlighted several of the system's features. The entire recognition process, from capturing a gesture to classifying it, runs in real time and adapts to a range of conditions. Because it relies only on standard, readily available hardware, the system is a practical and accessible option for real-world use.

The study, published in the journal "Sensors," reports that the model reaches a mean average precision of 98.2% at an intersection-over-union threshold of 0.5 (mAP@0.5) while running with minimal latency. Because mAP@0.5 scores both where the system locates each hand and which letter it assigns, it measures detection quality rather than classification accuracy alone. These results indicate that the system can deliver timely, reliable interpretations, which makes it suitable for applications such as live video processing and interactive platforms where speed and accuracy are both essential.
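
The 98.2% figure is a detection metric rather than a plain percentage of correctly labeled images. As a rough illustration of what mAP@0.5 measures, the sketch below computes intersection over union (IoU), per-class average precision using Pascal VOC-style greedy matching with all-point interpolation, and the mean over classes. It is not the authors' evaluation code; the function names and data layout are hypothetical, and established evaluators (for example, pycocotools or the Ultralytics validator) differ in details such as interpolation and matching rules.

```python
from collections import defaultdict
from typing import Dict, List, Sequence, Tuple

Box = Tuple[float, float, float, float]  # (x1, y1, x2, y2), hypothetical layout
Detection = Tuple[str, float, Box]       # (image_id, confidence, box)
GroundTruth = Tuple[str, Box]            # (image_id, box)


def iou(a: Box, b: Box) -> float:
    """Intersection over union of two axis-aligned boxes."""
    ix = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    iy = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = ix * iy
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def average_precision(dets: Sequence[Detection], gts: Sequence[GroundTruth],
                      thr: float = 0.5) -> float:
    """AP for one class: greedy matching at IoU >= thr, all-point interpolation."""
    if not gts:
        return 0.0
    gt_by_img: Dict[str, List[Box]] = defaultdict(list)
    for img, box in gts:
        gt_by_img[img].append(box)
    matched = {img: [False] * len(boxes) for img, boxes in gt_by_img.items()}

    # Walk detections from most to least confident, marking each TP (1) or FP (0).
    hits: List[int] = []
    for img, _score, box in sorted(dets, key=lambda d: d[1], reverse=True):
        best_iou, best_j = 0.0, -1
        for j, gt_box in enumerate(gt_by_img.get(img, [])):
            overlap = iou(box, gt_box)
            if overlap > best_iou:
                best_iou, best_j = overlap, j
        if best_j >= 0 and best_iou >= thr and not matched[img][best_j]:
            matched[img][best_j] = True
            hits.append(1)
        else:
            hits.append(0)  # too little overlap, or a duplicate of an already-matched box

    # Precision and recall after each detection in the ranked list.
    precisions: List[float] = []
    recalls: List[float] = []
    tp = 0
    for k, hit in enumerate(hits, start=1):
        tp += hit
        precisions.append(tp / k)
        recalls.append(tp / len(gts))

    # Interpolate: each precision becomes the best precision at that rank or any later one.
    for i in range(len(precisions) - 2, -1, -1):
        precisions[i] = max(precisions[i], precisions[i + 1])

    # Area under the interpolated precision-recall curve.
    ap, prev_recall = 0.0, 0.0
    for p, r in zip(precisions, recalls):
        ap += (r - prev_recall) * p
        prev_recall = r
    return ap


def mean_average_precision(dets_by_class: Dict[str, Sequence[Detection]],
                           gts_by_class: Dict[str, Sequence[GroundTruth]],
                           thr: float = 0.5) -> float:
    """mAP@thr: unweighted mean of per-class AP over the classes in gts_by_class."""
    aps = [average_precision(dets_by_class.get(c, []), gts, thr)
           for c, gts in gts_by_class.items()]
    return sum(aps) / len(aps) if aps else 0.0
```

For example, with two ground-truth "A" boxes, a correct high-confidence detection, then a spurious one, then a second correct detection, the class scores an AP of 5/6: full precision up to half recall, then 2/3 precision for the remaining half.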

The system is built on the ASL Alphabet Hand Gesture Dataset, which contains 130,000 images captured under diverse conditions, including different lighting, complex backgrounds, and a variety of hand angles and orientations, to help the model generalize. Each image is annotated with 21 keypoints marking essential hand structures such as the fingertips, knuckles, and wrist. These landmarks are crucial for distinguishing gestures that look visually similar.
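
Keypoint annotations can also supply bounding boxes for a detector. The sketch below assumes, purely for illustration, that each hand's box is the extent of its 21 annotated keypoints plus a small margin, written in the standard YOLO detection label format (`class x_center y_center width height`, normalized by image width and height). The class list, padding ratio, and function name are hypothetical; the article does not describe how the dataset's labels were actually produced.

```python
from typing import List, Sequence, Tuple

# Hypothetical class list: one class per ASL alphabet letter, A=0 ... Z=25.
CLASSES: List[str] = [chr(c) for c in range(ord("A"), ord("Z") + 1)]
NUM_KEYPOINTS = 21


def keypoints_to_yolo_label(
    letter: str,
    keypoints_px: Sequence[Tuple[float, float]],
    img_w: int,
    img_h: int,
    pad: float = 0.1,
) -> str:
    """Build one YOLO-format label line from 21 pixel-space hand keypoints."""
    if len(keypoints_px) != NUM_KEYPOINTS:
        raise ValueError(f"expected {NUM_KEYPOINTS} keypoints, got {len(keypoints_px)}")
    xs = [x for x, _ in keypoints_px]
    ys = [y for _, y in keypoints_px]
    x1, x2 = min(xs), max(xs)
    y1, y2 = min(ys), max(ys)
    # Enlarge the tight keypoint box by `pad` of its size on each side so the
    # palm and fingertip edges are not clipped, then clamp to the image.
    dx, dy = (x2 - x1) * pad, (y2 - y1) * pad
    x1, y1 = max(0.0, x1 - dx), max(0.0, y1 - dy)
    x2, y2 = min(float(img_w), x2 + dx), min(float(img_h), y2 + dy)
    # Convert corner coordinates to a normalized center and size.
    cx = (x1 + x2) / 2 / img_w
    cy = (y1 + y2) / 2 / img_h
    w = (x2 - x1) / img_w
    h = (y2 - y1) / img_h
    cls = CLASSES.index(letter)  # raises ValueError for an unknown letter
    return f"{cls} {cx:.6f} {cy:.6f} {w:.6f} {h:.6f}"


if __name__ == "__main__":
    kps = [(100 + 10 * i, 200 + 10 * i) for i in range(21)]
    print(keypoints_to_yolo_label("T", kps, 640, 480))  # 19 0.312500 0.625000 0.375000 0.500000
```

Deriving the box from the landmarks keeps the detection label consistent with the keypoint annotation; a hand-drawn box would be an equally valid alternative.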

Imad Mahgoub, a co-author of the study, noted that by combining deep learning with hand-landmark tracking, the team produced a system that is both highly accurate and accessible enough for daily use. The work marks a significant step toward inclusive communication technologies that could improve everyday life for deaf and hard-of-hearing people.

The roughly 11 million deaf people in the United States, about 3.6% of the population, stand to benefit from these advances. An estimated 15% of American adults also experience hearing difficulties, which underscores the need for effective communication tools. AI-driven interpretation systems offer a promising way to improve interactions in education, the workplace, healthcare, and social settings.

As the research continues, the team plans to extend the system beyond recognizing individual ASL letters to interpreting entire ASL sentences. That step would allow faster and more natural exchanges of thoughts and ideas, strengthening connections between the deaf community and the broader society.

Stella Batalama, Dean of the College of Engineering and Computer Science, emphasized the transformative potential of real-time ASL recognition. Such technologies strengthen communication for members of the deaf community and reflect a commitment to inclusivity. By bridging communication gaps, the AI-powered system can help users engage more easily with the people around them, supporting introductions, navigation, and everyday conversations. The project shows how thoughtful design can improve accessibility while promoting social integration.

The implications of this research extend beyond the technology itself; the work is a step toward a more connected and empathetic society. By applying AI to communication, the study reflects a commitment to equity and understanding, using technology to enable meaningful social interaction. The research team's goal of reducing barriers and promoting inclusion resonates with the deaf community and with society at large.

In summary, this real-time ASL interpretation system marks a new step in assistive technology for the deaf and hard-of-hearing community. By combining deep learning with hand tracking, it shows how new approaches can produce accessible, practical solutions. As the research continues, translating full ASL sentences into text may become possible, further enriching everyday interactions across a wide range of social settings.

Subject of Research: Real-time American Sign Language interpretation

Article Title: Real-Time American Sign Language Interpretation Using Deep Learning and Keypoint Tracking

News Publication Date: 28-Mar-2025

Web References: Florida Atlantic University

References: DOI 10.3390/s25072138

Image Credits: Florida Atlantic University

Keywords: American Sign Language, Assistive Technology, Deep Learning, Real-time Interpretation, Communication Accessibility, AI Innovations, Deaf and Hard-of-Hearing, Gesture Recognition, Inclusivity, Social Integration.