A notable advance in industrial anomaly detection has emerged from the research laboratories of Shibaura Institute of Technology (SIT), Japan, and FPT University, Vietnam. Spearheaded by Associate Professor Phan Xuan Tan, the newly developed framework, named MambaAlign, marks a significant step toward improving the precision and reliability of multimodal sensor data fusion for quality inspection in manufacturing environments. The approach tackles longstanding challenges associated with sensor misalignment and computational overhead, offering a promising avenue for robust, real-time defect detection across diverse industrial sectors.

Industrial quality inspection has traditionally depended on RGB cameras due to their speed and cost-efficiency. However, these systems frequently falter when tasked with identifying defects tied to subtle geometric deformations, thermal irregularities, or material inconsistencies—issues often obscured in conventional imaging. Supplementing RGB data with additional modalities like thermal imaging or depth sensing has proven beneficial, yet the integration of these heterogeneous data streams introduces substantial complexities. Existing multimodal fusion techniques are commonly plagued by loss of spatial detail, computational burden, or susceptibility to misalignments, challenges that are amplified in dynamic factory-floor settings.

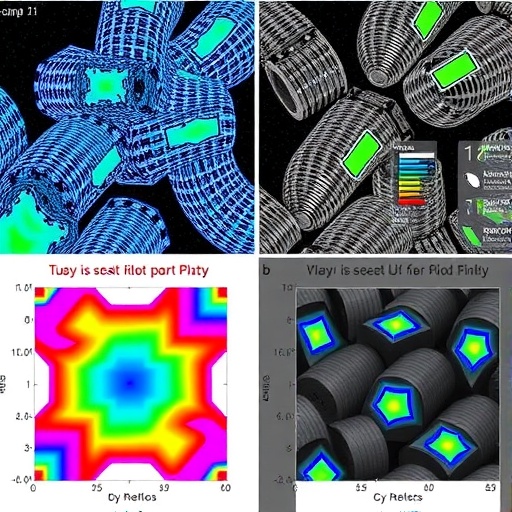

MambaAlign is designed to reconcile accuracy with computational efficiency. Central to its design is an alignment-aware state-space fusion mechanism that produces detailed, coherent anomaly maps despite modest sensor misregistration. Unlike heavily attention-based models, whose computational cost scales quadratically, the framework uses state-space refinement to capture the orientation-sensitive, long-range context needed to detect thin or oblique defects such as micro-cracks and scratches, while keeping runtime near-linear. The design also preserves pixel-level localization, which is critical for pinpointing subtle anomalies.
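The scaling contrast described above can be illustrated with a toy example. The following is a minimal sketch, not MambaAlign's actual implementation: it runs a scalar linear state-space recurrence over a 1-D sequence in a single pass, so the work grows linearly with sequence length, whereas full attention compares every position with every other. The function names and the scalar coefficients `a`, `b`, and `c` are hypothetical and chosen only for illustration. Summing a forward and a backward pass is one common way to make such a scan direction-aware; whether the framework does this is an assumption.

```python
from typing import List


def ssm_scan(x: List[float], a: float = 0.9, b: float = 0.1, c: float = 1.0) -> List[float]:
    """Scalar state-space recurrence (illustrative only).

    h_t = a * h_{t-1} + b * x_t
    y_t = c * h_t

    One pass over the input, so the cost is O(len(x)).
    """
    h = 0.0
    y: List[float] = []
    for x_t in x:
        h = a * h + b * x_t  # state carries context from all earlier positions
        y.append(c * h)
    return y


def bidirectional_scan(x: List[float], a: float = 0.9, b: float = 0.1, c: float = 1.0) -> List[float]:
    """Sum of a forward and a backward scan (hypothetical helper).

    Each output position then sees context from both directions,
    still at linear cost (two passes).
    """
    forward = ssm_scan(x, a, b, c)
    backward = ssm_scan(x[::-1], a, b, c)[::-1]
    return [f + g for f, g in zip(forward, backward)]


if __name__ == "__main__":
    # A single impulse decays geometrically along the scan direction.
    print(ssm_scan([1.0, 0.0, 0.0], a=0.5, b=1.0, c=1.0))
    print(bidirectional_scan([1.0, 0.0, 0.0], a=0.5, b=1.0, c=1.0))
```

In a 2-D setting, the same idea would be applied along rows, columns, or diagonals of a feature map, which is one way a scan could become sensitive to the orientation of a thin defect.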

The methodology underlying MambaAlign employs cross-recurrence interactions at deep feature integration stages to exchange semantic guidance between modalities, effectively harmonizing spatial and semantic information. A novel top-down reconstruction process subsequently reconstitutes the low-level feature channels to sustain fine-grained localization accuracy. This unique architecture strikes a delicate balance between robustness and computational parsimony, allowing the system to tolerate real-world imperfections in sensor alignment without compromising detection fidelity.

Rigorous evaluation of MambaAlign was conducted across three diverse RGB-plus-auxiliary datasets, showcasing its superior performance relative to contemporary state-of-the-art approaches. Quantitatively, it achieved an average improvement of approximately 4.8% in image-level AUROC, 5.0% in pixel-level AUROC, and an impressive 6.5% boost in per-region overlap metrics. Such performance gains were realized without incurring substantial computational penalties; the model sustains throughput rates near 30 frames per second at moderate image resolutions, making it compatible with real-time industrial inspection workflows.
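For readers unfamiliar with the metrics quoted above, the sketch below shows how image-level and pixel-level AUROC are commonly computed from anomaly maps. It is an illustration under stated assumptions, not the authors' evaluation code: the function names are hypothetical, the image-level score is taken as the maximum value of each anomaly map (a common convention that the source does not confirm), and the pairwise AUROC is written for clarity rather than speed. The per-region overlap metric is not covered here.

```python
from typing import List, Sequence

Map2D = List[List[float]]
Mask2D = List[List[int]]


def auroc(scores: Sequence[float], labels: Sequence[int]) -> float:
    """AUROC as the probability that a random anomalous sample
    scores higher than a random normal one (ties count as 0.5).

    Pairwise O(P * N) formulation, suitable only for small inputs.
    """
    pos = [s for s, l in zip(scores, labels) if l == 1]
    neg = [s for s, l in zip(scores, labels) if l == 0]
    if not pos or not neg:
        raise ValueError("AUROC needs at least one sample of each class")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))


def image_level_auroc(anomaly_maps: List[Map2D], image_labels: Sequence[int]) -> float:
    """One score per image: the maximum of its anomaly map (assumed convention)."""
    scores = [max(max(row) for row in m) for m in anomaly_maps]
    return auroc(scores, image_labels)


def pixel_level_auroc(anomaly_maps: List[Map2D], masks: List[Mask2D]) -> float:
    """Every pixel is a sample; the ground-truth mask gives its label."""
    scores = [v for m in anomaly_maps for row in m for v in row]
    labels = [v for m in masks for row in m for v in row]
    return auroc(scores, labels)


if __name__ == "__main__":
    maps = [[[0.1, 0.2], [0.1, 0.9]],   # defective image
            [[0.1, 0.3], [0.2, 0.1]]]   # normal image
    masks = [[[0, 0], [0, 1]],
             [[0, 0], [0, 0]]]
    print(image_level_auroc(maps, [1, 0]))
    print(pixel_level_auroc(maps, masks))
```

Image-level AUROC measures how well defective parts are separated from good ones, while pixel-level AUROC measures how well the anomaly map localizes the defect within an image.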

The practical implications of MambaAlign are profound. In the realm of electronics manufacturing, it enables the detection of micro-defects that could compromise circuit integrity, such as hairline fractures or missing components, by synergistically analyzing thermal and geometric data. Aerospace manufacturing benefits from enhanced visualization of subsurface delamination in composite materials, a defect type notoriously elusive to traditional RGB imaging. Automotive body assembly lines can leverage this technology to identify dents, scratches, and imperfect seams efficiently, reducing downstream rework and warranty claims.

Associate Professor Phan Xuan Tan highlights that MambaAlign not only delivers heightened accuracy but also sharp, contiguous anomaly delineation. This refinement minimizes false positives and negatives, thereby translating into fewer unnecessary interruptions and more actionable insights for process engineers. This is a crucial aspect for production environments where rapid decision-making and minimal downtime are paramount.

The technical sophistication of MambaAlign extends beyond its algorithmic novelty—it also embodies practical engineering principles rooted in real-world applicability. The system judiciously balances model size, runtime, and memory footprint, which are often bottlenecks in deploying deep learning models on factory floors. Its design facilitates integration with conveyor belt inspection lines and robotic vision systems, enabling inline defect detection that was previously unattainable with multimodal approaches.

From a broader perspective, this research underscores the pivotal role of AI-driven fusion methodologies in advancing the next generation of intelligent manufacturing systems. By surmounting the challenges of sensor alignment while remaining computationally viable, MambaAlign sets a precedent for future work on multimodal data fusion. It is a foundational step toward more resilient inspection solutions capable of adapting to the unpredictable nuances inherent in industrial environments.

This innovation also aligns with the ongoing trend of integrating AI with classical engineering disciplines to foster smarter production ecosystems. The capacity to accurately and efficiently identify defects at early stages promises significant reductions in waste, improved product reliability, and higher operational efficiency. Stakeholders across the aerospace, automotive, electronics, and energy sectors stand to gain from adopting such transformative technologies.

Moreover, the framework’s emphasis on preserving localization precision without resorting to heavy attention mechanisms distinguishes it within the AI and computer vision community. Replacing quadratic attention layers with state-space recurrences is an architectural choice that allows orientation-aware context to be captured at scale. This insight may extend beyond industrial anomaly detection to other domains that require fine-grained spatial analysis.

As implementation of multimodal inspection systems becomes increasingly prevalent, dealing with sensor misregistrations—whether caused by mechanical tolerance, environmental factors, or operational shifts—remains a critical hurdle. MambaAlign’s robustness to these modest misalignments ensures reliability without demanding costly recalibration routines or extensive manual interventions, thus fostering seamless adoption in existing production pipelines.

The combination of technical depth and industrial applicability marks MambaAlign as a milestone in the convergence of AI, computer vision, and industrial engineering. It exemplifies a successful international collaboration between researchers in Japan and Vietnam, targeting one of the most persistent challenges in modern manufacturing quality control. Looking forward, the principles introduced here may guide the development of increasingly autonomous, precise, and efficient inspection systems for the factories of the future.

Article Title:

MambaAlign: Alignment-aware state-space fusion for RGB-X industrial anomaly detection

News Publication Date:

1-Jan-2026

References:

DOI: 10.1093/jcde/qwaf143

Image Credits:

Dr. Phan Xuan Tan from Shibaura Institute of Technology, Japan, and Dr. Dinh-Cuong Hoang from FPT University, Vietnam

Keywords:

Engineering, Industrial engineering, Manufacturing, Electronics, Aerospace engineering, Quality control, Automotive engineering, Robotics